Live-streaming has emerged in recent years as one of the most influential and least regulated media environments online. Unlike pre-recorded video, live-streams unfold in real time, which makes spontaneous incidents of antisemitic hate speech, and the fringe personalities behind them, particularly difficult to regulate. The term “live-streaming” refers to broadcasting content to a social platform where viewers watch and react in real time. Everything the viewer sees is happening live, and fans can interact directly with content creators through the “live chat.”

It’s believed that about a quarter of all internet users regularly watch live-streams, with 8.5 billion hours of content consumed in 2024. Live-streaming has the potential to create conditions for antisemitism to spread quickly, and current platform moderation systems are not equipped to stop it consistently.

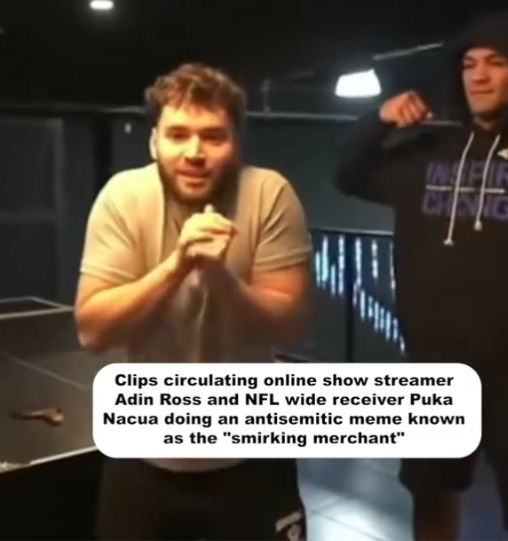

While gaming content is the backbone of most streaming platforms, the most consequential space for hate speech is the “IRL” (In Real Life) category. IRL streamers broadcast themselves engaging in everyday activities or sitting down for open commentary, cultivating a parasocial intimacy with their audiences. At times, these broadcasts lead to inflammatory or hateful expressions from the creator or their fans.

To understand how weak moderation enables antisemitism in live-streaming, it is important to examine three key areas:

- How antisemitic rhetoric spreads across the three major live-streaming platforms: Twitch, Kick, and Rumble

- How each platform’s moderation standards, or lack thereof, allow hate speech to persist

- What the streaming industry needs to do to address antisemitism more effectively

Twitch: The Most Popular, But Needs More Regulation

Twitch remains the dominant live-streaming platform, with approximately 35 million daily users and content spanning gaming, politics, and cultural commentary. It also maintains the most stringent Terms of Service of the major streaming platforms, yet enforcement remains inconsistent.

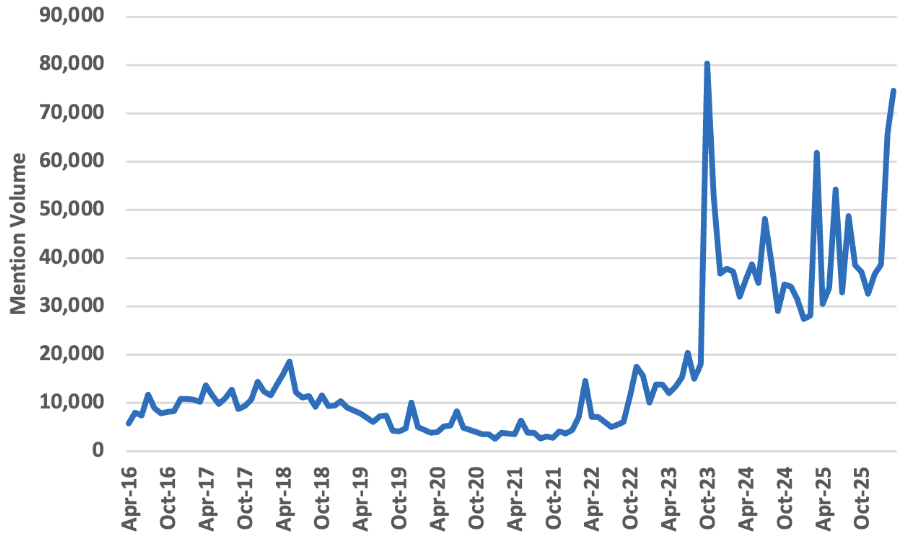

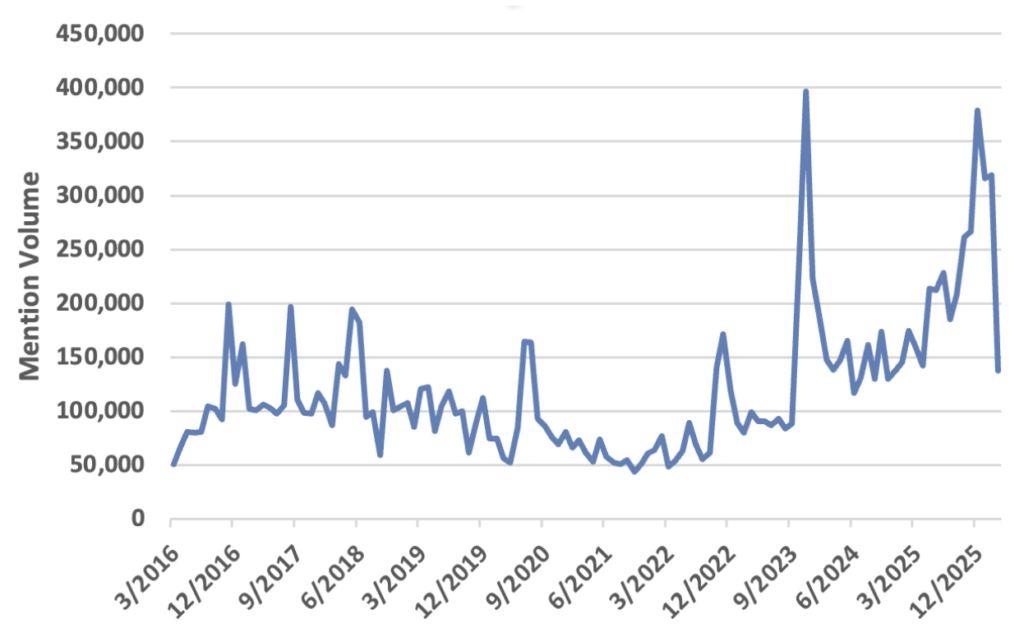

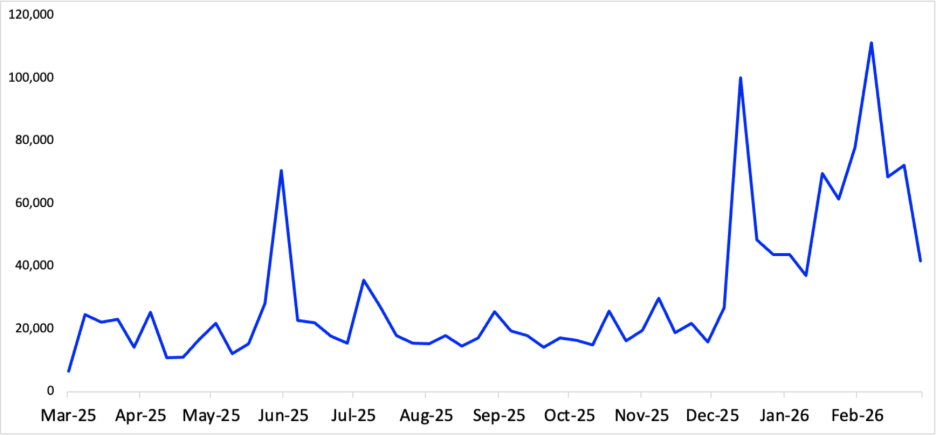

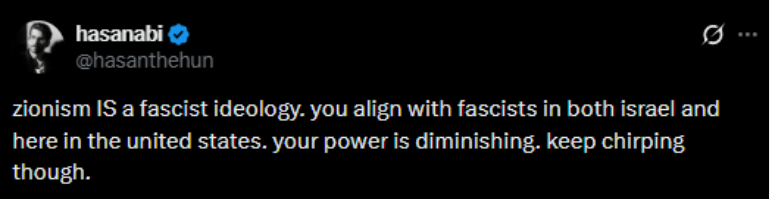

No case illustrates this tension more clearly than that of Hasan Piker, considered one of the most prominent left-wing political commentators on the platform. Using our Command Center, we tracked how often Piker’s name appeared in social media conversations about Jewish culture and related issues: he has been directly mentioned more than 281,000 times since October 7, 2023. While he initially condemned the October 7 attacks, his position has since shifted: he has expressed increasingly pro-Hamas rhetoric, downplayed the scale and nature of the violence, and amplified content from U.S.-designated terror organizations, for example by broadcasting a Houthi-produced music video to his audience.

Twitch has suspended Piker in the past for Terms of Service violations. However, the platform has not taken structural steps to prevent the recurrence of antisemitic language cloaked as “anti-imperialist” political commentary, a framing that exploits the ambiguity in moderation guidelines and allows hate to spread under the guise of geopolitical debate. Piker’s suspensions have been brief, and he is currently back on Twitch with few repercussions.

Kick: Growth Driven with Zero Enforcement

Kick, another popular streaming platform, has positioned itself as a haven for personalities banned or restricted on other platforms, offering more permissive content policies and a higher revenue share for streamers. The strategy has worked: the Stake.com-owned platform recorded 4.5 billion watch hours in 2025, making it the third most-watched streaming service globally.

Kick regularly hosts figures with documented histories of antisemitic and white nationalist rhetoric, including Sneako, Nick Fuentes, and Myron Gaines. These influencers have repeatedly peddled Holocaust denial and conspiracy theories about Jewish control, and have used antisemitic slurs on their platforms. The platform’s vague stance on hate speech, combined with its explicit appeal to those who feel “censored” elsewhere, creates an environment where inflammatory content is not merely tolerated but functionally incentivized. Where Twitch’s enforcement exists, albeit imperfectly, Kick largely does not enforce its terms at all, and it treats that absence as a free-speech selling point.

Rumble: When Fringe Goes Mainstream

Rumble is unique among these platforms because it isn’t used only for streaming. Beyond video and live broadcasting, it lets users host websites, so entire social media sites can be built and stored on Rumble’s network. This dual role gives Rumble an outsized footprint: it is simultaneously a content platform and the technical backbone for its users, and it is popular among a significant portion of right-wing digital media.

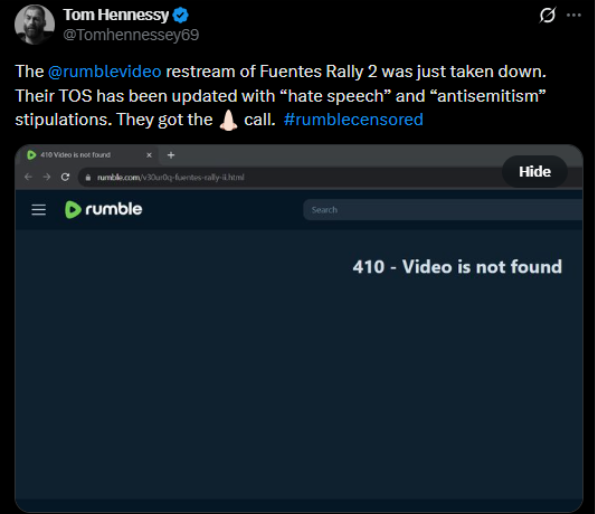

Rumble markets itself as a defender of free speech against “Big Tech censorship.” Its Terms of Service nominally prohibit hate speech and antisemitism, but enforcement is selective and inconsistent. Figures such as Andrew Tate and Nick Fuentes (both banned from mainstream platforms for inflammatory rhetoric) stream freely on Rumble. When the platform does act, it typically removes individual archived videos rather than suspending accounts, allowing bad actors to continue monetizing hate-filled content with minimal consequence. Even when Rumble tries to enforce its Terms of Service and crack down on hate speech, the backlash from its users is often filled with antisemitic, hateful messages claiming that the site has been compromised by those who seek to censor their views.

This selective enforcement is itself a message: Rumble’s anti-censorship brand requires it to protect controversial personalities, even when their content crosses into antisemitism. Rumble is largely unable to present itself as a safe space for those targeted by the “censorship of big tech” while also actively working to prevent the antisemitic hate speech that got these influencers censored in the first place. The result is a platform that has successfully normalized fringe voices for a mainstream-adjacent audience.

Industry Changes: How to Protect Users from Hate Speech

Across all three platforms, a consistent pattern emerges: policies exist, but enforcement is inconsistent, easily circumvented, or nonexistent. Antisemitic content persists not because rules are absent, but because they are applied unevenly and often too late. Addressing this problem requires structural change rather than incremental adjustments.

First, platforms must adopt clearer and more explicit definitions of antisemitism within their policies, including forms that appear as coded language or political framing. Ambiguity allows harmful content to evade enforcement.

Second, enforcement must become more consistent and consequential. Repeat violations should lead to meaningful penalties, including account suspensions, rather than isolated content removal that allows behavior to continue.

Finally, transparency is essential. Platforms should clearly communicate how moderation decisions are made and applied, building trust while reducing the perception that enforcement is arbitrary or politically motivated.

Live-streaming is not inherently conducive to antisemitism, but its structure (real-time interaction, audience participation, and inconsistent moderation) creates conditions where it can thrive. Without meaningful reform, these platforms will continue to amplify harmful narratives at scale, shaping online discourse in ways that are difficult to reverse.