Since the United States and Israel launched Operation Epic Fury on February 28, 2026, we have been tracking a growing volume of disinformation on social media. Many organizations, ours included, have seen an increase in misinformation and fake news across platforms during this time. Artificial intelligence has emerged as a weapon on this information battlefield, enabling the rapid production and distribution of false information at a scale and speed not seen before. The result is a polluted information environment in which millions of people have been exposed to content depicting events that never happened.

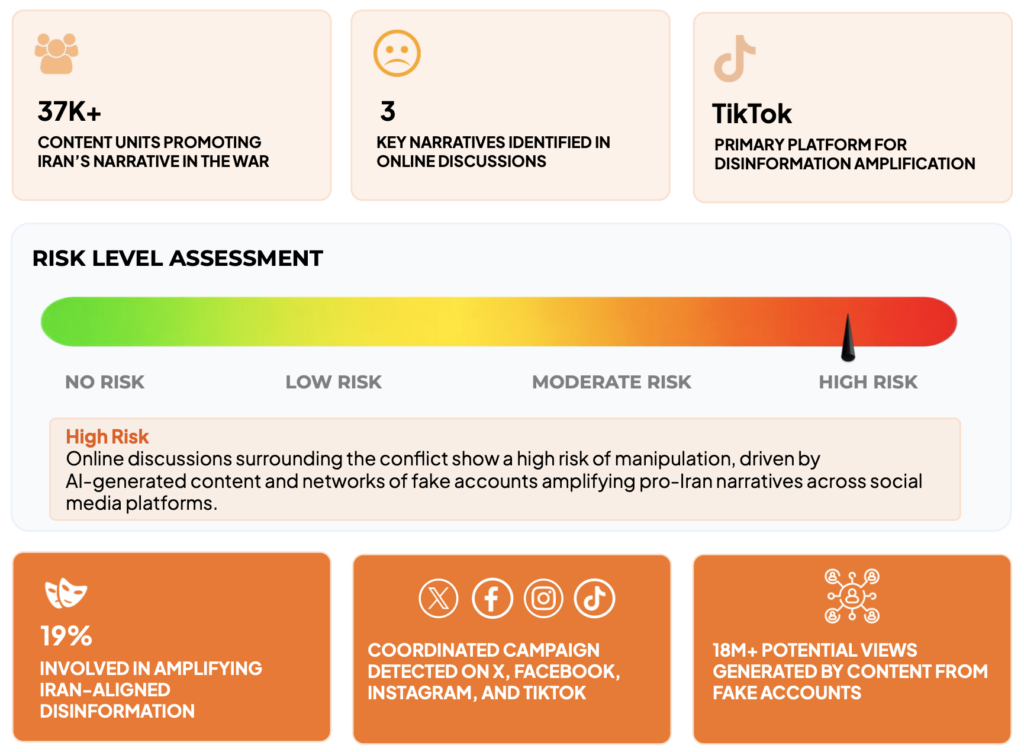

Cyabra, an Israeli social media intelligence company that uses AI-driven detection technology to identify coordinated inauthentic behavior, published a report documenting the scope of the disinformation campaign during the conflict’s first week. Analyzing activity across X, Facebook, Instagram, and TikTok between February 28 and March 7, the company identified more than 37,000 content units promoting pro-Iran narratives, generating over 145 million views and 9.4 million engagements.

The campaign was not organic. Cyabra identified clear hallmarks of centralized coordination: thousands of posts repeating identical narratives across multiple platforms, the same AI-generated videos appearing with near-identical captions across numerous accounts, fixed hashtag clusters including #standwithiran, #westandwithiran, #israelterroriststate, and #prayforiran, and synchronized burst-posting patterns. Nineteen percent of accounts amplifying Iran-aligned content were assessed as fake profiles.
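Two of the coordination signals described above, near-identical captions across accounts and synchronized burst posting, can be illustrated with a minimal sketch. This is not Cyabra's method, which is not detailed here; the `Post` record, the sample data, the 0.9 similarity threshold, and the 60-second window are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from itertools import combinations


@dataclass(frozen=True)
class Post:
    account: str    # hypothetical account handle
    caption: str    # post text
    timestamp: int  # seconds since an arbitrary start time


def near_identical(a: str, b: str, threshold: float = 0.9) -> bool:
    """True when two captions are nearly the same, ignoring case."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio() >= threshold


def coordinated_pairs(posts, threshold=0.9, window=60):
    """Pairs of different accounts that posted near-identical captions
    within `window` seconds of each other."""
    pairs = []
    for p, q in combinations(posts, 2):
        if p.account == q.account:
            continue  # the same account repeating itself is a different signal
        if abs(p.timestamp - q.timestamp) > window:
            continue  # too far apart in time to count as a burst
        if near_identical(p.caption, q.caption, threshold):
            pairs.append((p.account, q.account))
    return pairs


# Hypothetical sample data, not drawn from any real dataset.
posts = [
    Post("acct_a", "Example caption text about the event #exampletag", 0),
    Post("acct_b", "Example caption text about the event! #exampletag", 20),
    Post("acct_c", "Traffic update for the morning commute", 30),
    Post("acct_d", "Example caption text about the event #exampletag", 5000),
]
flagged = coordinated_pairs(posts)
print(flagged)  # [('acct_a', 'acct_b')]
```

In this toy example `acct_d` repeats the caption exactly but falls outside the time window, so only the first two accounts are flagged. A pairwise comparison like this would not scale to tens of thousands of posts; a production system would presumably rely on hashing or clustering instead.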

High-level findings from the Cyabra report:

TikTok was the dominant platform for misinformation in Cyabra’s analysis, accounting for 72% of all views (more than 105 million), followed by Facebook at 18% and X at 9.8%. However, the reach of these narratives does not stop there. Once posted, content from fake accounts, including AI-generated videos, is amplified by real users, extending false narratives to wider audiences.

Post circulating a fake video from an account posing as an “Israeli Jew of Arab origin” to gain credibility.

The Themes: What AI Is Being Used to Fabricate

Across monitoring and reporting, a consistent set of false narratives emerges, each designed to serve the same underlying propaganda objective: portraying Iran as winning the war while depicting the United States and Israel as outgunned, humiliated, or on the verge of collapse. The content is thematically coordinated, algorithmically optimized, and industrially produced.

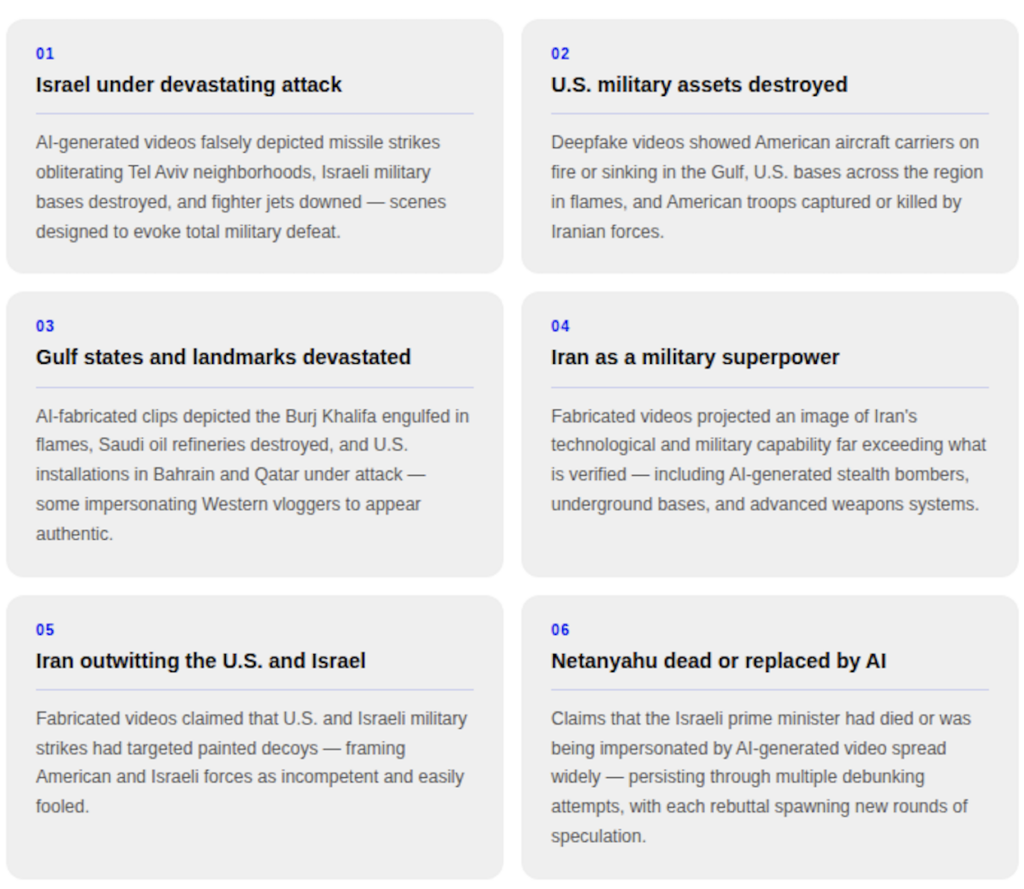

The main AI-driven disinformation narratives documented during the conflict include:

The collective impact is an information environment engineered to shift public perception — one in which Iran appears to be winning a war that by most independent accounts it is losing. By flooding social media with fabricated evidence of Iranian military dominance, the campaign aims to erode international support for the U.S.-Israeli operation, demoralize domestic audiences in both countries, and project an image of Iranian strength to global audiences who may have no other frame of reference for what is actually happening on the ground.

The Reach: BSA Command Center Analysis on X

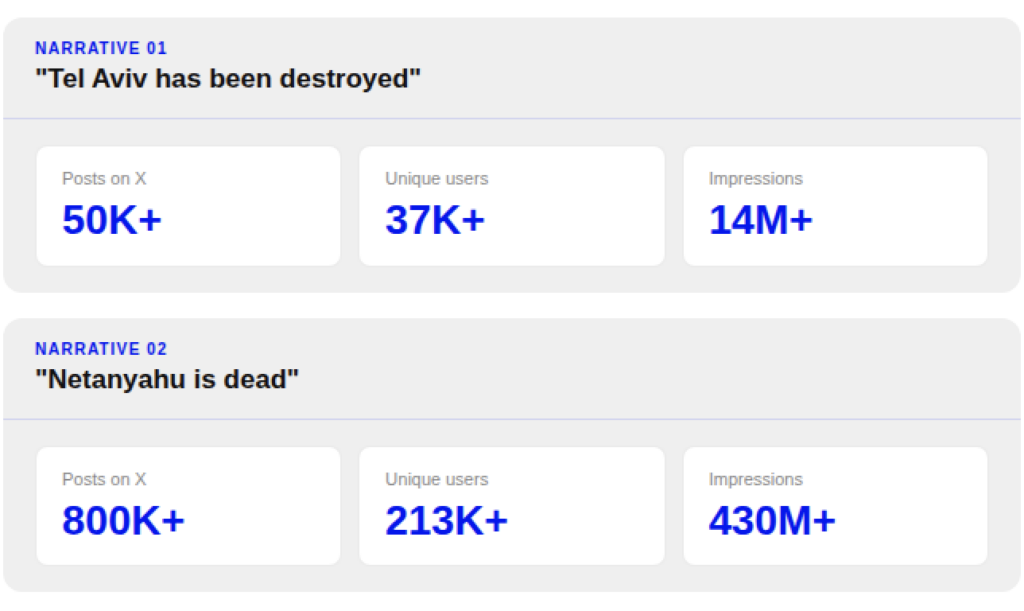

To examine how AI-generated disinformation moves beyond its original networks into the broader conversation, we analyzed two false narratives on X.

The results are significant.

The first narrative we examined was the claim that Tel Aviv had been destroyed or rendered unrecognizable by Iranian strikes. Between February 28 and March 19, we tracked more than 50,000 posts from over 37,000 unique users, accumulating more than 14 million impressions on X alone.

| Fake AI-generated image depicting Netanyahu’s body being pulled from beneath the rubble. The post received 18.9M views. | The story was picked up by another account, whose post received 17.2M views. |

The second narrative we examined was the alleged death of Israeli Prime Minister Benjamin Netanyahu, a claim originating in AI-generated content and deepfake analysis amplified by Iranian state media. Over the same period, we tracked over 800,000 posts from more than 213,000 unique users, accumulating more than 430 million impressions on X.

These figures reflect the broader conversation around each narrative on the platform — including those debunking, questioning, or amplifying the claims — and illustrate the degree to which a piece of fabricated AI content can seed a weeks-long, multi-hundred-million-impression information environment.
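As a rough illustration of the difference in scale between the two narratives, the reported figures can be converted into per-post and per-user ratios. Each figure above is stated as a lower bound ("more than", "over"), so the ratios below are approximations computed from the stated minimums, not measured values. The dictionary keys and the `ratios` helper are names introduced here for illustration.

```python
# Reported minimums for each narrative on X, February 28 to March 19.
narratives = {
    "Tel Aviv destroyed": {
        "posts": 50_000, "users": 37_000, "impressions": 14_000_000,
    },
    "Netanyahu death": {
        "posts": 800_000, "users": 213_000, "impressions": 430_000_000,
    },
}


def ratios(figures):
    """Approximate impressions per post and posts per unique user."""
    return {
        "impressions_per_post": figures["impressions"] / figures["posts"],
        "posts_per_user": figures["posts"] / figures["users"],
    }


for name, figures in narratives.items():
    r = ratios(figures)
    print(
        f"{name}: ~{r['impressions_per_post']:.1f} impressions per post, "
        f"~{r['posts_per_user']:.2f} posts per user"
    )
```

On these minimums, the Tel Aviv narrative works out to roughly 280 impressions per post and the Netanyahu narrative to roughly 537.5. Because the totals include posts debunking or questioning the claims, neither ratio measures the reach of the fabricated content alone.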

The Danger: AI and the Future of Misinformation

The Iran conflict has offered an early look at what the information environment looks like when artificial intelligence becomes a mass-production tool for disinformation — and the implications extend well beyond this war.

AI has made the spread of misinformation faster, cheaper, and more accessible than at any prior moment. A single operator with a text prompt can produce dozens of convincing fabrications in an afternoon, at near-zero cost, and distribute them to millions of people before a fact-checker has had time to respond. Social media platforms amplify the problem — built to reward engagement, they favor content that is visually dramatic and emotionally provocative over the mundane reality of authentic footage. The fabrications do not need to be believed by everyone. They only need to create enough doubt to fragment public understanding.

The reach figures documented in this report illustrate the scale of the challenge. When a false narrative reaches 430 million impressions before it can be effectively countered, the window for correction has already closed for most of the people who saw it. By the time a debunk is published and distributed, the false version has already shaped how millions of people understand events.

The burden of response cannot rest on fact-checkers alone — they operate at human speed in an environment where fabrication works at machine speed. Platforms must invest in detection, enforce labeling requirements, and ensure their own AI tools do not amplify the problem. And audiences bear responsibility too: in a conflict environment saturated with synthetic content, the habit of pausing before sharing — and treating dramatic imagery that confirms preexisting beliefs with particular scrutiny — has never mattered more.